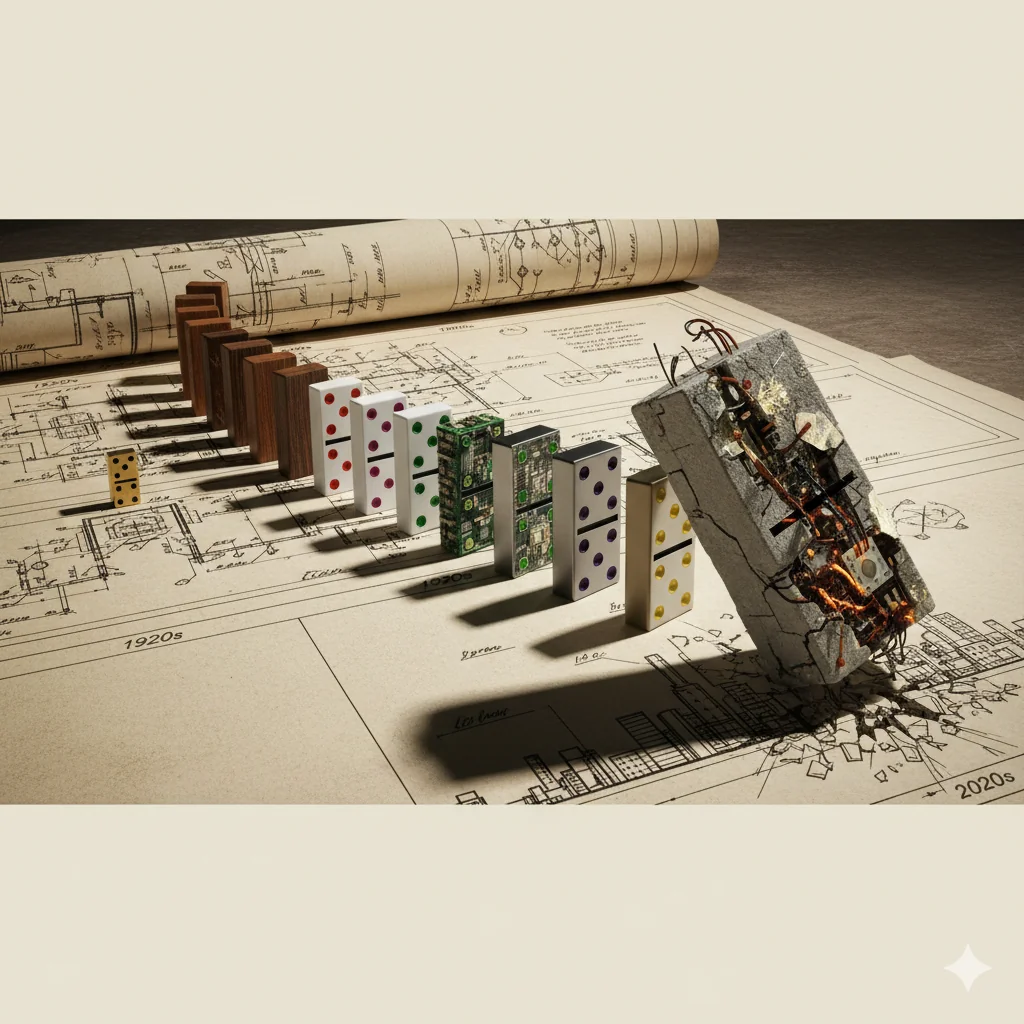

The Domino Effect: How a Single Design Assumption Can Cascade Through 50 Years of Operations

The forensic engineering report was 847 pages long. But the finding that mattered appeared on page 23:

“Critical design assumption, established during 2003 feasibility study: Tailings consolidation behavior modeled using permeability coefficient k = 1×10⁻⁶ cm/s based on laboratory testing of representative samples.”

“Actual field permeability, determined from 20 years of piezometer data and back-analysis: k = 3×10⁻⁷ cm/s. Tailings consolidated three times slower than predicted.”

That single number, off from reality by a factor of about three, had cascaded through everything:

- Water management system undersized (designed for faster drainage)

- Freeboard calculations optimistic (assumed lower water content)

- Raise schedule compressed (assumed faster consolidation between lifts)

- Stability analyses unconservative (lower pore pressures assumed)

- Closure timeline unrealistic (drainage assumed complete sooner)

- Cost estimates wrong (more operational adjustments needed)

One lab test result from Year Zero, embedded in the Design Basis Report, influenced every subsequent decision for two decades. And it was wrong. Not because anyone was negligent. Not because the engineer was unqualified. But because a single data point from limited samples became gospel, never questioned, never validated against actual field behavior.

This story (a composite of several real incidents) illustrates something GISTM hints at but most operations underestimate:

Design assumptions made early in a project’s life cast long shadows.

They influence operations decades later. They constrain future options. They compound through interconnected systems. And they’re often invisible—embedded in documents nobody revisits, taken for granted, treated as fact rather than assumption.

GISTM Requirement 4.8 mandates that the EOR “update the DBR every time there is a material change in the design assumptions, design criteria, design or the knowledge base.”

But here’s what this requirement doesn’t say:

How do you know when an assumption has changed—or worse, was wrong from the start—if nobody’s tracking assumptions separately from conclusions?

Let’s trace how design assumptions cascade through the lifecycle of a tailings facility, and what happens when they’re wrong.

The Genesis: How Assumptions Are Born

The Necessity of Assumptions

Engineering design requires assumptions. Always. Because:

- You’re designing for future conditions that don’t exist yet

- You have limited data from limited investigations

- You must predict behavior of complex materials over decades

- You’re working with natural variability and uncertainty

- You have budget and timeline constraints on investigations

Assumptions aren’t bad. They’re necessary.

But they become dangerous when:

- They’re not explicitly identified as assumptions

- They’re not documented with their basis and uncertainty

- They’re not validated against actual performance

- They’re not updated when evidence suggests they’re wrong

- They become invisible over time

How Early-Stage Assumptions Get Made

During prefeasibility and feasibility (Years -5 to -2):

Limited data forces assumptions:

- Maybe 10-20 boreholes across site

- Lab testing on limited samples

- Short-term monitoring (1-2 years)

- Regional data for climate, seismicity, hydrology

- Analogues from similar facilities or materials

Time pressure forces assumptions:

- “We need the feasibility study in 18 months”

- “We can’t afford another drilling campaign”

- “Use the data we have and make reasonable assumptions”

Economic pressure forces assumptions:

- Conservative assumptions increase costs

- Optimistic assumptions improve project economics

- Pressure to make project financially viable

Real example of how this plays out:

Feasibility study for a mine in South America:

Question: What’s the shear strength of tailings for stability analysis?

Data available: Five triaxial tests on samples from a similar deposit in the same region

Test results:

- Friction angle (φ’): 32°, 29°, 35°, 31°, 28°

- Mean: 31°

- Standard deviation: 2.7°

Design assumption made: φ’ = 28° (conservative, using lowest value from limited data)

Documented in DBR: “Tailings shear strength characterized by friction angle of 28° based on laboratory testing and regional experience.”

What’s invisible:

- Only 5 tests (small sample size)

- From a different deposit (not the actual tailings)

- Tested at different moisture/density than will occur in the field

- Uncertainty in how representative these samples are
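A quick calculation makes part of that hidden uncertainty visible. The sketch below applies Python's standard `statistics` module to the five friction angles above. Using a one-sided 95% lower confidence bound on the mean is one possible way to express sample-size uncertainty, not the method the design team used; the Student-t value for 4 degrees of freedom (about 2.132) is hard-coded from standard tables.

```python
import math
import statistics

# Friction angles (degrees) from the five triaxial tests quoted above
phi_tests = [32, 29, 35, 31, 28]

n = len(phi_tests)
mean_phi = statistics.mean(phi_tests)   # sample mean
sd_phi = statistics.stdev(phi_tests)    # sample standard deviation (n - 1 denominator)
se_mean = sd_phi / math.sqrt(n)         # standard error of the mean

# One-sided 95% Student-t quantile for n - 1 = 4 degrees of freedom (from tables)
T_95_DF4 = 2.132
lower_bound_mean = mean_phi - T_95_DF4 * se_mean

print(f"n = {n}, mean = {mean_phi:.1f} deg, sd = {sd_phi:.1f} deg")
print(f"95% lower bound on mean = {lower_bound_mean:.1f} deg")
print(f"design value (minimum test) = {min(phi_tests)} deg")
```

These statistics only describe scatter among the tested samples. They cannot tell you whether samples from a different deposit, tested at a different moisture and density, represent the field tailings, which is exactly the uncertainty that drops out of the DBR sentence.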

The assumption enters the design:

- Stability analyses use φ’ = 28°

- Factors of safety calculated

- Facility geometry determined

- Construction methods specified

- Costs estimated

Over time, the assumption becomes fact:

- Year 1: “Design assumed φ’ = 28°”

- Year 5: “Design is based on φ’ = 28°”

- Year 10: “The tailings have φ’ = 28°”

The uncertainty disappears. The assumption becomes truth.

The First Cascade: Design Phase Interconnections

Design isn’t linear. It’s a web of interconnected assumptions.

When one assumption changes, it triggers changes throughout the design.

Example: The Permeability Cascade

Assumption made: Tailings permeability k = 1×10⁻⁶ cm/s

This assumption affects:

1. Pore Pressure Dissipation Modeling

- Determines: How quickly water drains after deposition

- Influences: Predicted piezometric surface location

- Impacts: Stability calculations (lower pore pressure = higher stability)

2. Consolidation Settlement Predictions

- Determines: How quickly tailings consolidate under self-weight

- Influences: Timeline for placing subsequent lifts

- Impacts: Construction schedule and ultimate facility geometry

3. Seepage Analysis

- Determines: How much water seeps through dam and foundation

- Influences: Seepage collection system design

- Impacts: Environmental management and water balance

4. Water Management System Design

- Determines: How much water remains in facility vs. drains

- Influences: Pond size, freeboard requirements, reclaim capacity

- Impacts: Operational flexibility and water balance

5. Beach Slope Predictions

- Determines: How far tailings flow before depositing

- Influences: Deposition strategy and required beach length

- Impacts: Facility footprint and stability margins

6. Closure Design

- Determines: How long until excess water drains post-closure

- Influences: Closure timeline and water quality predictions

- Impacts: Closure cost estimates and timeline
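The six dependencies above form a directed graph, and finding everything a changed assumption touches is a reachability question. The sketch below encodes a simplified, hypothetical version of that graph (node names paraphrase the list above and are not taken from any real design model) and uses breadth-first search to list every element downstream of the permeability assumption.

```python
from collections import deque

# Hypothetical dependency graph: each key feeds the elements in its list.
DEPENDS_ON = {
    "tailings_permeability": [
        "pore_pressure_dissipation",
        "consolidation_settlement",
        "seepage_analysis",
        "water_management",
        "beach_slope",
        "closure_design",
    ],
    "pore_pressure_dissipation": ["stability_analysis"],
    "consolidation_settlement": ["raise_schedule", "facility_geometry"],
    "seepage_analysis": ["seepage_collection", "water_balance"],
    "water_management": ["freeboard", "water_balance"],
    "beach_slope": ["deposition_strategy", "facility_geometry"],
    "closure_design": ["closure_cost"],
    "stability_analysis": ["facility_geometry"],
}


def affected_by(assumption: str) -> list[str]:
    """Return every downstream element reachable from `assumption`, in BFS order."""
    seen = {assumption}
    order = []
    queue = deque([assumption])
    while queue:
        node = queue.popleft()
        for child in DEPENDS_ON.get(node, []):
            if child not in seen:  # visit each element once, even if reached twice
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


impacts = affected_by("tailings_permeability")
print(len(impacts), "elements affected:")
for element in impacts:
    print(" -", element)
```

Even this toy graph turns one input into more than a dozen affected elements. A real design basis has many more edges, which is why each assumption should carry a record of the design elements it feeds.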

Now imagine that assumption is wrong by a factor of 3 (actual permeability is lower).

Every single downstream calculation is affected.
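Why does a factor-of-3 error in permeability matter so much for the raise schedule? In small-strain (Terzaghi) one-dimensional consolidation, the coefficient of consolidation is c_v = k / (m_v γ_w), and the time to reach a given degree of consolidation is t = T_v H_dr² / c_v. Time therefore scales inversely with k. The sketch below assumes illustrative values for compressibility m_v and drainage path H_dr (not data from any facility) and uses the standard time factor T_v ≈ 0.848 for 90% consolidation; tailings practice often uses large-strain models instead, so treat this as a scaling argument only.

```python
# Small-strain Terzaghi consolidation: time to 90% consolidation of a tailings lift.
# m_v and h_dr defaults are illustrative assumptions, not site data.
GAMMA_W = 9.81          # unit weight of water, kN/m^3
T_V_90 = 0.848          # time factor for 90% average consolidation
SECONDS_PER_DAY = 86_400


def t90_days(k_cm_per_s: float, m_v: float = 1e-3, h_dr: float = 5.0) -> float:
    """Days to 90% consolidation.

    k_cm_per_s: hydraulic conductivity, cm/s
    m_v:        coefficient of volume compressibility, m^2/kN (assumed)
    h_dr:       drainage path length, m (assumed)
    """
    k_m_per_s = k_cm_per_s / 100.0            # cm/s -> m/s
    c_v = k_m_per_s / (m_v * GAMMA_W)         # coefficient of consolidation, m^2/s
    return T_V_90 * h_dr**2 / c_v / SECONDS_PER_DAY


design = t90_days(1e-6)   # permeability assumed in the design
actual = t90_days(3e-7)   # back-calculated field permeability from the opening story
print(f"design t90 = {design:.0f} days, actual t90 = {actual:.0f} days")
print(f"ratio = {actual / design:.2f}")
```

With these assumed inputs the design case reaches 90% consolidation well inside an 18-month raise cycle, while the back-calculated case does not. Different m_v or H_dr values change the absolute times, but the ratio stays fixed at k_design / k_actual.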

Real example from a copper mine in Chile:

Design assumption (2005): k = 5×10⁻⁶ cm/s based on lab tests

Actual field behavior (2010-2015): Back-calculation from piezometers suggested k = 8×10⁻⁷ cm/s

Implications discovered through dam safety review:

- Pore pressures: Running higher than predicted (explains some monitoring anomalies that had been concerning)

- Consolidation: Happening slower (explains why settlement monuments were showing less settlement than predicted)

- Beach slopes: Steeper than predicted (materials not draining as fast, staying saturated longer)

- Freeboard: Less than planned (pond larger because less drainage into beach)

- Stability: Factors of safety lower than calculated in design (still acceptable, but less margin)

- Closure: Will take longer than estimated (water won’t drain as quickly)

The response required:

- Updated stability analyses with actual permeability

- Revised water management strategy

- Modified deposition practices

- Enhanced monitoring in some areas

- Updated closure plan and cost estimates

- Revised DBR documenting actual vs. assumed permeability

Cost of discovering and correcting: ~$8M in investigations, redesign, and operational modifications

Cost if not discovered until later: Potentially much higher, or catastrophic if stability margins were critical

The Second Cascade: Operations Phase Adaptations

Operations must work with the facility as it actually exists, not as it was designed to be.

When reality differs from design assumptions, operations adapt—sometimes in ways that create new risks.

Adaptation Pattern 1: Informal Workarounds

Scenario: Design assumed tailings would consolidate enough to allow raises every 18 months.

Reality: Consolidation slower, settlements not complete by scheduled raise.

Design-compliant response: Delay raise until consolidation complete, accept production impact

Actual response (often): Proceed with raise on schedule, reasoning “settlements aren’t that much behind prediction, probably fine”

What this creates: Deviation from design intent, potentially softer foundation than designed for, increased risk

Why it happens: Production pressure, cost pressure, rationalization (“we’ve done it before and nothing bad happened”)

Adaptation Pattern 2: Changing Operations to Compensate

Scenario: Design assumed specific beach slopes based on material properties.

Reality: Beach slopes steeper than predicted (material doesn’t flow/drain as assumed).

Adaptations made:

- Reduce deposition rates (try to get shallower slopes)

- Change discharge points more frequently

- Use more deposition points simultaneously

- Install more spigots than designed

- Modify tailings delivery system

Each adaptation has costs and potentially creates new risks:

- More complex operations

- Higher operating costs

- Equipment not designed for modified use patterns

- Procedures not written for actual practices

- Increased staff time and training needs

Real example from a gold mine in Nevada:

Design assumption: Single-point discharge with natural beach development would achieve 1-2% slopes

Reality: Single-point discharge created 3-4% slopes (tailings not as fluid as assumed)

Adaptations over 10 years:

- Added 12 additional spigot discharge points (not in original design)

- Developed complex rotation schedule for deposition

- Modified pipework system repeatedly

- Created extensive procedures for spigot management

- Hired additional operators to manage system

Total cost of adaptations: >$15M over facility life

Alternative if caught early: Might have redesigned deposition system for $3-5M during construction

The lesson: Adapting to wrong assumptions is often more expensive than getting assumptions right—but costs are spread over time and become “just the way we operate.”
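The point about costs being spread over time can be checked with a simple present-value comparison. The sketch below assumes the Nevada adaptation costs (taking the >$15M figure as $15M) were spent evenly over the 10 years and discounts them at an assumed 8% per year. Both the even spending profile and the discount rate are illustrative assumptions, not figures from the example.

```python
def present_value(cash_flows: list[float], rate: float) -> float:
    """Discount end-of-year cash flows (years 1, 2, ...) back to today."""
    return sum(cf / (1 + rate) ** year for year, cf in enumerate(cash_flows, start=1))


ADAPTATION_TOTAL = 15.0          # $M over facility life (lower bound from the example)
YEARS = 10
DISCOUNT_RATE = 0.08             # assumed
UPFRONT_REDESIGN = (3.0, 5.0)    # $M range if caught during construction (year 0)

annual = [ADAPTATION_TOTAL / YEARS] * YEARS   # assumed even spending profile
pv_adapt = present_value(annual, DISCOUNT_RATE)
print(f"PV of adaptations: ${pv_adapt:.1f}M vs upfront redesign "
      f"${UPFRONT_REDESIGN[0]:.0f}-{UPFRONT_REDESIGN[1]:.0f}M")
```

Under these assumptions the discounted adaptation cost is still roughly twice the top of the redesign range, and the real adaptation figure was a lower bound.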

Adaptation Pattern 3: Enhanced Monitoring to Compensate

Scenario: Design assumptions about material behavior have higher uncertainty than ideal.

Response: Install extra monitoring to verify actual behavior and provide early warning if concerning.

This is appropriate risk management—but it has implications:

More monitoring means:

- Higher capital costs (instruments)

- Higher operational costs (reading, maintaining, analyzing data)

- More data to manage and interpret

- Potentially more false alarms or ambiguous signals

- More engineer time required for analysis

Real example from an iron ore mine in Australia:

Design assumption: Foundation would settle uniformly under facility loading

Uncertainty: Foundation properties variable (karst features possible but not fully characterized)

Risk management: Extensive monitoring network (40+ settlement monuments, 25+ inclinometers, 30+ piezometers) vs. a typical facility of similar size (20 monuments, 10 inclinometers, 12 piezometers)

Cost: Additional $2M for instrumentation, $300K/year for enhanced monitoring and analysis

Value: Detected differential settlement early in operations, enabled operational adjustments preventing potential stability issues

Justified: The enhanced monitoring compensated for geologic uncertainty that couldn’t be eliminated through investigation

But notice: This is an ongoing cost, every year, for the life of the facility, because the design assumption had high uncertainty

The Third Cascade: Regulatory and Stakeholder Implications

Design assumptions affect more than engineering—they shape regulatory approvals and stakeholder expectations.

Environmental Impact Predictions Based on Assumptions

Design assumptions determine:

- Predicted seepage volumes and quality

- Estimated air quality impacts (dust from beach)

- Water balance predictions

- Closure timeline and environmental trajectory

These predictions appear in:

- Environmental impact statements

- Permit applications

- Regulatory approvals

- Community consultations

- Project commitments

When reality differs from assumptions:

Scenario 1: Actual impacts less than predicted

- Generally fine (though may have over-designed mitigation)

- Rarely causes problems

Scenario 2: Actual impacts greater than predicted

- Regulatory non-compliance possible

- Community concern and trust erosion

- Pressure to implement additional mitigation

- Potential for permit modifications (expensive, time-consuming)

Real example from a mine in Canada:

Environmental assessment (2008) predicted:

- Post-closure: Excess water in facility would drain within 5 years

- Seepage water quality would improve to discharge standards within 10 years

- Active water treatment needed for 5-10 years post-closure

Basis: Consolidation and geochemical modeling based on lab tests

Reality discovered through operational monitoring (2015-2020):

- Consolidation much slower than predicted (permeability lower than assumed)

- Geochemical reactions slower than lab tests suggested (temperature effects not captured)

- Likely water treatment needed for 20-30 years, possibly longer

Consequences:

- Need to revise closure plan and regulatory approvals

- Significantly higher closure cost estimates ($40M additional NPV)

- Community concern: “You said 10 years, now you’re saying 30+?”

- Regulator questions: “What else is different from what you predicted?”

The cascade: Wrong material property assumption → Wrong closure timeline prediction → Regulatory and social license implications

Stakeholder Communication Based on Assumptions

During project development, stakeholders are told:

- “The facility will be X meters high”

- “Construction will take Y years”

- “Closure will be completed Z years after mining ends”

- “Post-closure monitoring needed for A years”

These communications are based on design assumptions.

When assumptions prove wrong and plans change:

Community perspective: “They lied to us” or “They don’t know what they’re doing”

Reality: Assumptions changed based on actual data, but explaining this is challenging

The trust erosion compounds:

- First change: “Okay, they’re learning”

- Second change: “They don’t seem certain”

- Third change: “Why should we believe anything they say?”

Real example from community engagement:

Mine presented design (2010): “Facility will be 60m high, footprint 150 hectares, operate for 15 years”

Revision 1 (2015): “Actually, consolidation slower than expected, need to expand footprint to 180 hectares to maintain 60m height”

- Community reaction: Concern about additional land use, questions about what else might change

Revision 2 (2020): “Mine life extended, facility will now be 75m high and operate for 22 years”

- Community reaction: Significant opposition, trust severely eroded, lengthy approval process for amendments

The technical reality: These changes were appropriate responses to actual data and economic conditions

The social reality: Each change reinforced perception of uncertainty and weakened trust

The lesson: Early design assumptions have social and political consequences that extend far beyond engineering

The Fourth Cascade: Economic and Financial Implications

Wrong assumptions cost money. Sometimes lots of money.

Capital Cost Escalation

Design assumptions determine:

- Facility geometry

- Materials quantities

- Construction methods

- Equipment requirements

- Timeline

When assumptions prove wrong during construction:

Example from a facility under construction:

Assumption: Foundation bedrock encountered at depth of 5-8m

Reality: Bedrock irregular, some areas 15m deep

Implications:

- Additional excavation required (extra 120,000 m³)

- Increased backfill quantities

- Modified drainage system design

- Extended construction schedule

- Cost overrun: $18M (35% of foundation budget)

Root cause: Limited geotechnical investigation during design (25 boreholes across a 200-hectare site) led to the assumption of more uniform conditions

Operating Cost Escalation

Design assumptions determine operational efficiency.

Real example from a nickel mine in Canada:

Design assumption: Tailings would settle and consolidate sufficiently that reclaim water could be drawn from supernatant pond without excessive solids entrainment

Reality: Consolidation much slower, supernatant water had higher suspended solids than predicted

Consequences:

- Reclaim water quality affected downstream processes

- Had to add clarification system (not in original design): $4M capital

- Higher operating costs for water treatment: $800K/year

- Reduced water recovery (had to discharge more to maintain quality): ~$500K/year in lost value

- Total 10-year impact: roughly $17M ($4M capital plus about $13M in added operating costs and lost value)

Alternative timeline:

- If assumption validated during first year of operations: Could have installed clarification before it impacted downstream processes: $4M

- If assumption correct in design: System would have worked as intended: $0

The cost of wrong assumptions compounds over operational life.

Closure Cost Escalation

This is where wrong assumptions really bite.

Because closure costs are:

- Far in the future (decades away)

- Estimated with high uncertainty

- Based on compounding assumptions about how facility will perform

- Financially secured (bonded) based on those estimates

- Subject to regulatory scrutiny

Common pattern:

Initial closure cost estimate (prefeasibility): $15M

- Based on assumptions about consolidation rate, drainage timeline, water quality evolution, revegetation success

Updated estimate (5 years into operations): $28M

- Consolidation slower than assumed

- Water quality trajectory different than predicted

- Additional monitoring needed longer than planned

Updated estimate (15 years into operations): $52M

- Post-closure water treatment needed much longer

- Climate change impacts requiring design modifications

- Regulatory standards evolved

Updated estimate (at closure): $89M

- Actual conditions require more extensive interventions than predicted

- Unexpected geochemical behavior

- Longer monitoring timeline

The challenge: Financial assurance (bonds, trust funds) based on early estimates may be inadequate

Real example (anonymized):

A US mine with a closure bond of $22M based on 2005 estimates

Company went bankrupt in 2019, site entered regulatory receivership

Actual closure cost assessment: $67M

Gap covered by: State/federal funds (i.e., taxpayers)

Root cause analysis identified: Original closure cost estimates based on overly optimistic assumptions about:

- Consolidation and drainage rates

- Water treatment duration

- Natural revegetation success

- Post-closure monitoring needs

The cascade: Wrong technical assumptions → Wrong cost estimates → Inadequate financial assurance → Taxpayer liability

The Fifth Cascade: Constraint on Future Decisions

Perhaps the most insidious effect: Early assumptions constrain future options.

Path Dependency: When You’re Locked Into Early Decisions

Once a facility is built based on certain assumptions, you’re committed:

Example:

Design assumption: Centerline construction method appropriate (based on assumed consolidation rates and stability margins)

Built: First 20m of facility using centerline method

Discover years later: Consolidation slower than assumed, stability margins less than predicted, centerline becoming challenging

Question: Should we switch to downstream method for remaining construction?

Challenge: Very difficult/expensive to transition methods mid-facility-life

Result: Often forced to continue with original method (because changing is too disruptive), implementing compensatory measures instead (enhanced drainage, slower raise rates, more monitoring)

You’re locked into the path set by original assumptions.

Foreclosed Options: What You Can’t Do Because of Earlier Decisions

Real scenario from a gold mine in Africa:

Design assumption (2005): Mine life 12 years, tailings facility designed for corresponding volume

Reality (2015): Ore body larger than originally estimated, mine life could extend to 22 years

Question: Can we expand tailings facility to accommodate?

Investigation revealed:

- Original design optimized for 12-year volume (steep slopes, tight footprint)

- Expanding would require:

  - Flattening slopes (major regrading)

  - Expanding footprint (additional permitting, land acquisition, community displacement)

  - Buttressing existing structure (expensive)

- Alternative: Build second facility nearby (but best sites already used for other infrastructure)

Total cost to extend mine life: $180M, of which $90M was tailings-related (dealing with original facility designed for wrong assumptions about mine life)

If mine-life uncertainty had been recognized in the original design: Could have designed for flexibility (staged construction, footprint reserved for expansion): Additional upfront cost perhaps $20M

The lesson: Early assumptions that prove wrong can make future adaptations extremely expensive or even infeasible

How to Break the Cascade: Assumption Management

GISTM requires updating the DBR when assumptions change. But how do you actually manage assumptions?

Step 1: Make Assumptions Explicit and Visible

Instead of: “Tailings permeability is k = 1×10⁻⁶ cm/s”

Document as:

- Assumption: Tailings permeability k = 1×10⁻⁶ cm/s

- Basis: Laboratory testing on 12 samples from similar deposit

- Uncertainty: ±1 order of magnitude (limited samples, different source material, lab vs. field conditions)

- Validation plan: Back-calculate permeability from first 2 years of piezometer data and compare to assumption

- Implications if wrong: [List affected design elements: consolidation rates, pore pressure predictions, water management, etc.]

- Contingency: If actual permeability >3× different, trigger design review of affected systems

The difference: Assumption is flagged as assumption, uncertainty is quantified, validation is planned, and implications are understood
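The structured record above maps naturally onto a small data type. The sketch below is a hypothetical Python representation (the class, field names, and ID are illustrative, not a GISTM-defined schema). It separates two thresholds the record implies: the stated uncertainty band (±1 order of magnitude) and the contingency rule (trigger a design review if the observed value differs by more than a factor of 3 in either direction).

```python
from dataclasses import dataclass, field


@dataclass
class DesignAssumption:
    """Hypothetical record mirroring the fields listed above."""
    assumption_id: str
    description: str
    assumed_value: float
    basis: str
    uncertainty_factor: float           # e.g. 10.0 for "±1 order of magnitude"
    validation_plan: str
    affected_elements: list[str] = field(default_factory=list)
    review_trigger_factor: float = 3.0  # contingency: review if more than 3x different

    def deviation_factor(self, observed: float) -> float:
        """Multiplicative deviation, symmetric for over- and under-estimates."""
        ratio = observed / self.assumed_value
        return max(ratio, 1.0 / ratio)

    def within_stated_uncertainty(self, observed: float) -> bool:
        return self.deviation_factor(observed) <= self.uncertainty_factor

    def needs_design_review(self, observed: float) -> bool:
        return self.deviation_factor(observed) > self.review_trigger_factor


k_assumption = DesignAssumption(
    assumption_id="GEO-003",  # hypothetical ID
    description="Tailings permeability k (cm/s)",
    assumed_value=1e-6,
    basis="Laboratory testing on 12 samples from similar deposit",
    uncertainty_factor=10.0,
    validation_plan="Back-calculate k from first 2 years of piezometer data",
    affected_elements=["consolidation rates", "pore pressure predictions", "water management"],
)

observed_k = 3e-7
print(k_assumption.within_stated_uncertainty(observed_k))  # inside the ±1 order band
print(k_assumption.needs_design_review(observed_k))        # but beyond the 3x trigger
```

Run against the opening story's field value of 3×10⁻⁷ cm/s, the record reports that the value sits inside the stated uncertainty band yet still crosses the review trigger. Keeping the two thresholds separate is what converts "we knew it was uncertain" into a defined action.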

Step 2: Create an Assumption Register

Don’t bury assumptions in technical reports. Track them explicitly.

Assumption register includes:

| Assumption ID | Description | Basis | Uncertainty | Design Elements Affected | Validation Status | Current Assessment |
|---|---|---|---|---|---|---|
| GEO-001 | Foundation permeability k=1×10⁻⁵ cm/s | 8 field tests | ±50% | Seepage, underdrains | Validated 2015 | Confirmed accurate |
| GEO-002 | Tailings φ’ = 28° | 5 lab tests | ±3° | Stability, slopes | Validation ongoing | Appears conservative |
| HYD-001 | Design storm 145mm/24hr | Regional analysis | High (limited record) | Spillway, freeboard | Not yet validated | Exceeded 2019 (168mm) - under review |
| OPS-001 | Beach slope 1.5% | Modeling + analogue | Medium | Deposition strategy | Validated 2014 | Actual slopes 2-3% - investigating |

This register:

- Lives as an active document (updated regularly)

- Reviewed during DSR and risk assessments

- Triggers reviews when validation shows assumption wrong

- Provides audit trail of how understanding evolved
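Because the register is tabular, the "triggers reviews" step can be partly automated with a simple query. The sketch below holds the four example rows as plain Python dictionaries (columns abridged) and flags any assumption that is unvalidated or whose current assessment signals a discrepancy. The keyword matching is a hypothetical stand-in for whatever status codes a real register would use.

```python
# The four example rows from the register above (columns abridged).
REGISTER = [
    {"id": "GEO-001", "affects": ["Seepage", "Underdrains"],
     "validation": "Validated 2015", "assessment": "Confirmed accurate"},
    {"id": "GEO-002", "affects": ["Stability", "Slopes"],
     "validation": "Validation ongoing", "assessment": "Appears conservative"},
    {"id": "HYD-001", "affects": ["Spillway", "Freeboard"],
     "validation": "Not yet validated", "assessment": "Exceeded 2019 (168mm) - under review"},
    {"id": "OPS-001", "affects": ["Deposition strategy"],
     "validation": "Validated 2014", "assessment": "Actual slopes 2-3% - investigating"},
]

# Hypothetical keywords signalling that a review is open or needed.
FLAG_WORDS = ("under review", "investigating", "exceeded")


def needs_attention(row: dict) -> bool:
    """True if the assumption is unvalidated or its assessment flags a discrepancy."""
    unvalidated = row["validation"].lower().startswith("not yet")
    flagged = any(word in row["assessment"].lower() for word in FLAG_WORDS)
    return unvalidated or flagged


def rows_affecting(element: str) -> list[str]:
    """IDs of assumptions that feed a given design element."""
    return [r["id"] for r in REGISTER if element in r["affects"]]


print("Needs attention:", [r["id"] for r in REGISTER if needs_attention(r)])
print("Feeds freeboard:", rows_affecting("Freeboard"))
```

The reverse lookup (`rows_affecting`) matters as much as the flag: when a freeboard question comes up in a dam safety review, it shows which assumptions sit underneath the answer.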

Step 3: Design for Assumption Uncertainty

When assumptions have high uncertainty, design should accommodate:

Option 1: Conservative design

- Use pessimistic assumptions

- Creates larger safety margins

- More expensive upfront

- Robust to assumption being wrong

Option 2: Flexible design

- Design for current best estimate

- BUT maintain flexibility to modify if assumptions prove wrong

- Reserve footprint for upgrades

- Use staged construction

- Plan contingency measures

Option 3: Adaptive design

- Design based on current assumptions

- Plan specific triggers for validation

- Pre-design contingency responses

- Implement modifications as better data obtained

Example of flexible design:

High-uncertainty assumption: Long-term water treatment duration (could be 10 years or 50 years depending on geochemical behavior)

Inflexible approach: Design and build a water treatment plant for a 10-year life (and hope for the best)

Flexible approach:

- Design treatment system with modular capacity

- Reserve land and services for expansion

- Use leased/mobile equipment initially (can extend if needed)

- Plan triggers for decision about permanent vs. extended temporary treatment

Result: Can adapt to reality as it emerges

Step 4: Systematic Validation Program

For each major assumption, plan how and when you’ll validate it:

Validation methods:

- Direct measurement: Instrument and monitor to measure actual value

- Back-calculation: Use observed performance to calculate actual properties

- Comparative analysis: Compare predictions to observations

- Independent testing: Additional investigations as facility develops

Validation timing:

- Early: First 1-2 years of operations (catch major discrepancies early)

- Periodic: Every 3-5 years (check for drift or changing conditions)

- Triggered: When unusual performance observed
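As one concrete instance of back-calculation, a textbook approach reads the time to 50% excess pore-pressure dissipation (t50) from a piezometer record, converts it to a field coefficient of consolidation with c_v = T50 H_dr² / t50 (T50 ≈ 0.197 in Terzaghi theory), and then recovers permeability as k = c_v m_v γ_w. The sketch below assumes a single-lift, small-strain idealization and an assumed m_v; the observed t50 is a made-up input for illustration, not monitoring data.

```python
GAMMA_W = 9.81     # unit weight of water, kN/m^3
T_V_50 = 0.197     # Terzaghi time factor for 50% average consolidation


def back_calc_k_cm_per_s(t50_days: float, h_dr_m: float, m_v: float) -> float:
    """Field permeability (cm/s) from observed time to 50% pore-pressure dissipation.

    t50_days: time for excess pore pressure to fall by half (from piezometers)
    h_dr_m:   drainage path length, m
    m_v:      coefficient of volume compressibility, m^2/kN (assumed)
    """
    t50_s = t50_days * 86_400
    c_v = T_V_50 * h_dr_m**2 / t50_s      # field coefficient of consolidation, m^2/s
    k_m_per_s = c_v * m_v * GAMMA_W       # invert c_v = k / (m_v * gamma_w)
    return k_m_per_s * 100.0              # m/s -> cm/s


assumed_k = 1e-6
observed_t50 = 190.0   # days, illustrative value only
k_field = back_calc_k_cm_per_s(observed_t50, h_dr_m=5.0, m_v=1e-3)
print(f"back-calculated k = {k_field:.1e} cm/s "
      f"(assumed/field ratio = {assumed_k / k_field:.1f})")
```

In practice the result is only as good as the assumed m_v and drainage geometry, so back-calculated values are usually cross-checked against settlement monitoring and field permeability tests before the register is updated.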

Real example of systematic validation:

One mine implemented an “Assumption Validation Program”:

Year 1-2: Intensive data collection to validate major assumptions

- Continuous piezometer monitoring → back-calculate permeability

- Settlement monitoring → validate consolidation predictions

- Beach slope mapping → validate deposition modeling

- Water balance tracking → validate hydrological assumptions

Results documented in “Assumption Validation Report”:

- 12 major assumptions reviewed

- 8 confirmed within expected ranges

- 3 showed modest deviation (10-30%) - design implications assessed, minor modifications made

- 1 showed significant deviation (permeability 3× lower than assumed) - triggered detailed review and design updates

Cost of program: $1.2M over 2 years

Value: Caught several issues early when corrections were relatively inexpensive, prevented larger problems later

Step 5: Update the DBR (Actually Do It)

GISTM Requirement 4.8 says update DBR when assumptions change.

In practice, many operations don’t do this systematically because:

- “We’ll update it at next major modification”

- “The assumption is close enough”

- “Updating the DBR is expensive and time-consuming”

- “Operations are working fine, why create extra work?”

Result: DBR gradually becomes historical document reflecting original design, not current understanding

Better practice:

Tier 1 update (Minor): Assumptions validated without significant change

- Document validation results in an appendix to the DBR

- Confirm design remains appropriate

- Frequency: After validation activities (every 2-3 years)

- Effort: Days to weeks

Tier 2 update (Moderate): Assumptions changed modestly

- Update affected sections of DBR

- Document implications and any compensatory measures

- Update relevant drawings/analyses

- Frequency: As needed when assumptions shift

- Effort: Weeks to months

Tier 3 update (Major): Assumptions significantly wrong, design implications substantial

- Comprehensive DBR revision

- Re-analysis of affected systems

- Potentially major design modifications

- ITRB/reviewer involvement

- Frequency: Rare (hope never needed, but must be done if required)

- Effort: Months
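The tiers can be tied to the same deviation measure used during validation. The sketch below is one hypothetical mapping: deviations under 10% go to Tier 1, larger deviations below a factor of 3 go to Tier 2, and a factor of 3 or more (roughly the level that triggered a detailed review in the validation example above) goes to Tier 3. The function name and cutoffs are illustrative; each operation would need to set its own.

```python
def dbr_update_tier(assumed: float, observed: float,
                    minor: float = 1.10, major: float = 3.0) -> int:
    """Map a validation result to a DBR update tier (hypothetical cutoffs).

    Deviation is a symmetric multiplicative factor, so a value 3x too high
    and a value 3x too low are treated alike.
    """
    if assumed <= 0 or observed <= 0:
        raise ValueError("values must be positive")
    ratio = observed / assumed
    factor = max(ratio, 1.0 / ratio)
    if factor < minor:
        return 1   # validated without significant change: appendix note
    if factor < major:
        return 2   # modest change: update affected DBR sections
    return 3       # significant change: comprehensive revision


# Illustrative validation results: (assumption, assumed value, observed value)
results = [
    ("friction angle (deg)", 28.0, 29.0),
    ("settlement rate (mm/yr)", 100.0, 85.0),
    ("permeability (cm/s)", 1e-6, 3e-7),
]
for name, assumed, observed in results:
    print(f"{name}: Tier {dbr_update_tier(assumed, observed)}")
```

A multiplicative measure suits properties like permeability that span orders of magnitude; for quantities such as friction angle, an additive tolerance in degrees may be the more natural cutoff.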

The key: Don’t let perfect be the enemy of good. Even minor updates maintain the connection between design documents and current understanding.

The Accountability Question

As the Accountable Executive, ask yourself:

Do I know what the critical design assumptions are for our tailings facility?

Not just in general—specifically:

- What material properties were assumed?

- What environmental conditions were assumed?

- What operational practices were assumed?

- What closure behavior was assumed?

Can I answer:

- Which assumptions have been validated against actual performance?

- Which assumptions have proven wrong or questionable?

- What are the implications if key assumptions are significantly off?

- When was the DBR last updated to reflect current understanding?

If you can’t answer these questions, you have invisible risk.

Because assumptions made years ago—possibly before you were in your role, possibly by people no longer with the company—are still constraining decisions and affecting safety today.

The cascade is running. The question is whether you’re managing it.

The Bottom Line: Assumptions Are Living Things

Design assumptions aren’t static facts. They’re provisional hypotheses that should be:

- Explicitly documented with their uncertainty

- Systematically validated against actual performance

- Updated when evidence suggests they’re wrong

- Tracked over the facility lifecycle

When this doesn’t happen:

- Wrong assumptions persist invisibly

- They cascade through interconnected systems

- They create compounding costs

- They constrain future options

- They increase risk

GISTM gives you the framework: Update the DBR when assumptions change.

But you have to create the system that:

- Identifies assumptions

- Tracks them

- Validates them

- Recognizes when they’ve changed

- Triggers the update

Without that system, you’re flying on decades-old assumptions that might have been reasonable once but may no longer reflect reality.

And the cascade continues, invisibly, until something forces it into the light.

Usually not in a good way.

Does your GISTM compliance system track design assumptions and their evolution, or just store static design documents?